
You probably walked past someone today wearing AI smart glasses. They looked completely ordinary. But underneath those frames was a live camera, a microphone, and an AI model processing everything around you — including you — in real time.

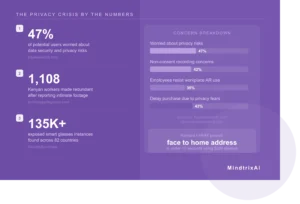

The debate around AI smart glasses vs privacy is no longer a future concern. It is happening right now. Real people have had their most intimate moments silently filmed and reviewed by strangers on the other side of the world. Harvard students showed that strangers could be identified by name and home address in seconds using a $299 pair of off-the-shelf glasses. Two months after workers reported what they saw, Meta ended the contract that employed them, and more than a thousand people lost their jobs.

This guide covers every side of that story — the technology, the real scandals, the law, what Reddit and Gen Z are actually saying, and what you can do about it. No hype in either direction. Just the full picture.

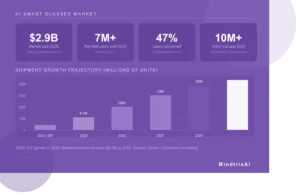

- The AI smart glasses market hit $2.9 billion in 2025 and is projected to reach $8.4 billion by 2035; unit shipments are growing at about 47% CAGR toward 35 million units by 2030

- Meta reportedly sold over 7 million Ray-Ban AI pairs in 2025, more than tripling its prior-year volume

- A Harvard experiment showed anyone can be identified by name and home address through smart glasses in seconds

- Kenyan workers at a Meta subcontractor watched intimate footage of wearers — 1,108 lost their jobs for speaking up

- 47% of potential users are already concerned about data security risks from camera-enabled glasses

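- The EU AI Act applies generally from August 2026; its bans, including on untargeted facial-image scraping and most police use of real-time facial ID in public spaces, have applied since February 2025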

- There is currently no universal right to opt out of being recorded in public

The AI Smart Glasses Boom Nobody Is Slowing Down

Before we get into the problems, the scale of what we are dealing with needs to be clear.

According to Omdia research, AI glasses made up 78% of total global smart glasses shipments in H1 2025, up sharply from 46% in H1 2024. The category is on track to ship 5.1 million units in 2025, a 158% year-on-year increase, with projections reaching 35 million units by 2030 at a 47% compound annual growth rate.
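As a sanity check, the compound annual growth rate can be recomputed from the endpoints quoted in this article using CAGR = (end / start)^(1 / years) - 1. The short Python sketch below does that arithmetic. The helper name `cagr` is our own, and the inputs are simply the figures cited here, not independently verified data.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1 / years) - 1


# Unit shipments cited above: 5.1 million (2025) to 35 million (2030).
units_rate = cagr(5.1, 35.0, 2030 - 2025)

# Revenue projection cited in the summary list: $2.9B (2025) to $8.4B (2035).
revenue_rate = cagr(2.9, 8.4, 2035 - 2025)

print(f"Unit CAGR 2025-2030:    {units_rate:.1%}")
print(f"Revenue CAGR 2025-2035: {revenue_rate:.1%}")
```

Growth from 5.1 million to 35 million units over five years works out to roughly 47% a year. The $2.9 billion to $8.4 billion revenue projection corresponds to only about 11% a year. The 47% rate therefore describes unit shipments, not revenue.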

Meta alone reportedly sold more than 7 million Ray-Ban pairs in 2025, more than tripling its prior-year volume. The product line now includes prescription models, meaning these devices are moving from novelty to something millions wear all day, in every space they enter.

The conversation about AI smart glasses vs privacy cannot wait until the technology matures further. Seven million cameras are already walking around on people’s faces. That is the reality we are navigating right now.

📊 Market fact: The XR market shipped 14.5 million devices in 2025, up 41.6% year over year, with smart glasses driving almost all of that growth and accounting for roughly half of all XR shipments worldwide for the first time. — Treeview Research, 2026

“AI smart glasses raise significant privacy concerns.” — Kleanthi Sardeli, lawyer, European digital-rights group NOYB, via Glass Almanac

🔍 MindtrixAI Finding: The AI smart glasses market is growing faster than the regulatory and social frameworks designed to govern it. A technology that ships 10 million units in 2026 while operating under a patchwork of privacy laws is a technology that has outrun accountability.

What Do These Glasses Actually Capture? (More Than You Think)

Most people imagine AI smart glasses as a hands-free assistant. Ask it a question, it answers. Take a photo, hands-free. Helpful, harmless, convenient.

The reality is more layered. A typical pair captures continuous video or still images, records audio, processes voice commands, and connects all of that to cloud services for analysis and storage. Cybersecurity experts agree on one fundamental truth: data in transit is data at risk. When AI smart glasses capture your environment, they do not process everything locally on the frames. They send biometric data, voiceprints, and visual feeds to an app on the wearer’s phone, which syncs it to a remote cloud server.

AI glasses can capture sensitive data including facial features, voiceprints, eye-tracking patterns, and images of people who are identifiable. Under laws like the GDPR, Illinois’ BIPA, and California’s CCPA, this qualifies as biometric personal data — carrying stricter expectations around notice, consent, retention, and use.

⚡ Quick Fact: Voice recordings triggered by the Meta glasses wake word are stored in the cloud by default and can be kept for up to one year. As of April 2025, Meta removed the ability to opt out of cloud storage beyond manual deletion. — Help Net Security, February 2026
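To make "stored for up to one year" concrete, the sketch below models which voice clips could still sit in cloud storage on a given day. It assumes a flat 365-day window from capture, and it assumes manual deletion is the only other way a clip leaves storage. Meta's actual retention mechanics are not public, and `VoiceClip`, `possibly_still_stored`, and the clip IDs are hypothetical.

```python
from collections.abc import Set
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "up to one year" is modeled as a flat 365-day window from capture.
# This is an illustrative model, not Meta's documented deletion schedule.
RETENTION_WINDOW = timedelta(days=365)


@dataclass(frozen=True)
class VoiceClip:
    clip_id: str       # hypothetical identifier
    captured_on: date


def possibly_still_stored(clips: list[VoiceClip], today: date,
                          manually_deleted: Set[str] = frozenset()) -> list[str]:
    """IDs of clips not manually deleted and still inside the retention window."""
    return [
        c.clip_id
        for c in clips
        if c.clip_id not in manually_deleted
        and today - c.captured_on < RETENTION_WINDOW
    ]


clips = [
    VoiceClip("a", date(2024, 12, 1)),
    VoiceClip("b", date(2025, 11, 20)),
    VoiceClip("c", date(2026, 1, 15)),
]
print(possibly_still_stored(clips, today=date(2026, 2, 1), manually_deleted={"c"}))
```

With these sample dates, only clip `b` is reported. Clip `a` is older than the window, and clip `c` was deleted by hand. Under this model, anything said to the glasses in the past year that was not manually removed may still be stored.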

Here is what most product pages quietly skip: the recording indicator light on Meta Ray-Ban glasses was designed to inform the people around the wearer, not the wearer themselves. And while Meta says the light cannot be disabled without violating its user agreement, the Sama investigation revealed workers saw footage where users appeared unaware their glasses were recording at all.

The Harvard Experiment That Proved Anyone Can Be Identified

In October 2024, two Harvard students proved something most people were not ready to hear.

AnhPhu Nguyen and Caine Ardayfio used a pair of Ray-Ban Meta Smart Glasses and built software they named I-XRAY. It worked like this: the wearer taps the frame to photograph someone, which triggers a bot that pushes the image through PimEyes (a public reverse image search tool), finds matching faces from across the internet, then cross-references those results with people-search databases to pull a name, home address, and phone number.

They demonstrated it on strangers on a subway platform. Name. Home address. Phone number. In seconds.

“Basically, why we did this was to raise awareness of how much data we have just publicly available,” said Nguyen. They have not released the software. But they say an open-source version is a matter of time.

According to Purdue Global Law School’s privacy analysis, federal law in the United States does not bar the use of facial recognition systems. Only a few states — most notably Illinois — have laws that forbid collecting facial data without the subject’s written permission.

“Privacy in public is probably dead.” — Forbes, reporting on the Harvard I-XRAY demonstration

🔍 MindtrixAI Finding: I-XRAY used no hacking, no proprietary tools, and no special access. It used $299 consumer hardware and databases anyone can search. The demonstration did not reveal a product flaw — it revealed a flaw in the assumption that public spaces are private enough.

The Meta and Sama Scandal: When Workers Watched Strangers Undress

This is the story that made the abstract concrete, and it deserves more attention than it has received.

In February 2026, Swedish newspapers Svenska Dagbladet and Göteborgs-Posten published an investigation revealing that private footage from Meta Ray-Ban glasses users was being reviewed by human workers at Sama, a Kenya-based data annotation firm contracted by Meta.

Workers reported seeing sensitive content including nudity, sexual activity, people using bathrooms, bank card information, private messages, and medical conversations. “We see everything, from living rooms to naked bodies,” one worker told the newspapers.

In one documented case, a man’s wife undressed after he left the glasses running on a table. He was not recording intentionally. The device was passively running. And someone in Nairobi was watching.

Meta’s response made things worse. Two months after the allegations, Meta terminated its entire contract with Sama, making 1,108 workers redundant with only six days’ notice. Many were already involved in a $1.6 billion lawsuit over prior content moderation trauma.

⚠️ Legal update: A class-action lawsuit was filed in the US by plaintiffs in California and New Jersey. The UK’s ICO wrote to Meta calling the situation “concerning.” Kenya’s data protection authority opened a formal investigation. — The Next Web

“When the AI is attached to a camera worn on someone’s face throughout the day, the training data is their life.” — The Next Web

🔍 MindtrixAI Finding: Meta’s privacy policy disclosed that data could be reviewed by AI systems. It did not disclose that human workers in another country would review intimate footage, with no safeguards for the people captured in it. That gap between the policy and the practice is exactly where trust is lost.

Real Incidents at Street Level: The Wax Center, the Campus, and a Million Views

The Harvard experiment was a controlled proof of concept. The Sama story was a corporate accountability failure. But the incidents happening in everyday settings are the ones that affect ordinary people — and they keep accumulating.

On TikTok, a young woman shared a video saying she went to a European Wax Center in Manhattan for a Brazilian wax, only to find her aesthetician wearing Meta Ray-Bans equipped with a camera. The batteries were not charged, the aesthetician claimed. But the experience went viral, triggering a wave of concern about when or whether recording in intimate service settings is acceptable. The Washington Post covered the broader backlash in August 2025.

In October 2025, the University of San Francisco issued a warning after reports that a man wearing Ray-Ban Meta smart glasses was approaching women on and around campus and recording interactions that were later shared on social media.

One woman said she was approached on a walk, had a conversation with a man wearing glasses that looked like ordinary sunglasses, and only later discovered a video of her had been posted online with nearly a million views.

💡 Did you know? A coalition of 70 organizations formed to press Meta over facial recognition plans in 2025, including civil liberties groups, digital rights advocates, and academic researchers who argue always-on camera devices should require explicit opt-in consent from bystanders. — Glass Almanac

“For women, children, and vulnerable communities, this isn’t about convenience. It’s about control. About power. About whether you can walk through the world without being turned into content.” — Cybersecurity Advisors Network, Not a Good Look, AI

Exam Halls, Boardrooms, and Workplaces: The Hidden Cheating Problem

The AI smart glasses vs privacy debate does not stop at public spaces. It extends into every controlled environment built on the assumption that you cannot hide useful technology in plain sight.

According to Times Higher Education, researchers warn there is “no defence” against wearable AI in exams. A student wearing AI glasses that look identical to prescription frames could receive real-time answers, access notes, or stream their exam paper to an AI assistant, invisibly and silently, with nothing a room supervisor could detect.

Traditional anti-cheating measures assumed students could not hide useful technology in plain sight. AI smart glasses break that assumption entirely. Metal detectors cannot distinguish electronics inside frames. Requiring students to prove their glasses are non-smart is not technically feasible at scale. And banning glasses outright is not a reasonable accommodation for students who genuinely need them.

📊 Workplace stat: Around 35% of employees already resist using AR glasses due to fears of monitoring and workplace surveillance. That resistance is not technophobia; it is a proportionate response to a genuine, documented risk. — Cervicorn Consulting Survey, 2025

Tips for Organizations Before Deploying AI Smart Glasses

- Conduct a Privacy Impact Assessment before any workplace deployment

- Implement geofencing to automatically disable recording in bathrooms, break rooms, and confidential meeting spaces

- Require employees to disclose when the camera is active

- Use data processing agreements with any AI vendor accessing footage

- In many jurisdictions, these are not optional best practices — they are legal requirements

Source: Purdue Global Law School, Smart Glasses Privacy Risks, February 2026
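Geofencing of the kind described in the tips above comes down to a point-in-polygon test: if the wearer's position falls inside a designated zone, the camera is disabled. The Python sketch below uses the standard ray-casting method. It assumes the glasses already know their position on a floor plan, for example from some indoor-positioning system, which the source does not specify. The zone names and coordinates are hypothetical.

```python
from typing import Optional

# Hypothetical no-record zones on one floor plan, as polygons in metres (x, y).
NO_RECORD_ZONES: dict[str, list[tuple[float, float]]] = {
    "restroom": [(0, 0), (4, 0), (4, 3), (0, 3)],
    "break_room": [(10, 0), (16, 0), (16, 5), (10, 5)],
}


def point_in_polygon(x: float, y: float, polygon: list[tuple[float, float]]) -> bool:
    """Ray casting: inside if a ray cast to the right crosses an odd number of edges."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed,
        # which also guarantees y2 != y1 in the division below.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def camera_allowed(x: float, y: float) -> tuple[bool, Optional[str]]:
    """Return (allowed, zone); recording is blocked inside any no-record zone."""
    for name, polygon in NO_RECORD_ZONES.items():
        if point_in_polygon(x, y, polygon):
            return False, name
    return True, None


print(camera_allowed(2.0, 1.5))  # a position inside the restroom polygon
print(camera_allowed(7.0, 2.0))  # a position in the corridor between zones
```

A real deployment would also need to handle positioning error near zone edges. One conservative choice is to pad each zone by the expected positioning error, so that uncertainty blocks recording rather than allowing it.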

Is Privacy Becoming a Luxury? The Uncomfortable Truth

There is a dimension to the AI smart glasses vs privacy conversation that rarely gets the attention it deserves.

A 2024 consumer survey found that 47% of potential users are concerned about data security and privacy risks linked to camera-enabled devices. But concern is not the same as protection.

The people who can afford to protect their privacy increasingly can — private schools with strict tech policies, premium legal advice, apps that minimize data collection, controlled environments without public cameras. People who cannot afford those layers have no real opt-out. They walk through shared spaces where someone nearby may be wearing a camera that sends footage to a server in another country, reviewed by an underpaid worker with no obligation to protect them.

Vogue’s analysis of surveillance and privilege captured this precisely: privacy is becoming a luxury good. The ability to move through the world without being recorded, analyzed, or identified is no longer a default condition for everyone. It is something you pay for, or you do not have it.

“When your data is captured by someone else’s glasses, you have no visibility, no access rights, and no ability to delete it. It’s surveillance with plausible deniability.” — Cybersecurity Advisors Network

AI Smart Glasses Brand Comparison: Privacy, Features, and Value

The market in 2026 is no longer just Meta. Google, Samsung, Rokid, Solos, and Apple are all either shipping or very close. Here is how the major players compare across the factors that matter most for privacy, usability, and value.

| Brand / Model | Price (USD) | Privacy Controls | AI Capability | Recording Indicator | Data Storage | Privacy Score | Overall Rating |
|---|---|---|---|---|---|---|---|
| Meta Ray-Ban Gen 2 | $299–$379 | Basic (LED light, can be covered) | ⭐⭐⭐⭐⭐ Meta AI, voice commands, live translation | LED (disableable) | Cloud by default, phone local option | ⭐⭐⭐ 6/10 | ⭐⭐⭐⭐ 8/10 |
| Meta Ray-Ban Display | $799 | Basic (same LED system) | ⭐⭐⭐⭐⭐ Full AR overlay + Meta AI | LED (disableable) | Cloud by default | ⭐⭐⭐ 5/10 | ⭐⭐⭐⭐ 8.5/10 |
| Google Android XR (2026) | TBA (est. $399–$599) | Strong (on-device processing planned) | ⭐⭐⭐⭐⭐ Gemini AI, Android XR platform | Hardware indicator (confirmed) | Local-first architecture | ⭐⭐⭐⭐ 7.5/10 | ⭐⭐⭐⭐ 8/10 |
| Samsung Galaxy Glasses | $379–$499 (est. July 2026) | Moderate (Galaxy AI ecosystem) | ⭐⭐⭐⭐ Galaxy AI, productivity focus | Physical LED planned | Samsung Cloud + local options | ⭐⭐⭐⭐ 7/10 | ⭐⭐⭐⭐ 7.5/10 |
| Rokid AI Glasses | $299–$399 | Strong (corner recording light, local option) | ⭐⭐⭐⭐ Display + AI assistant | Corner indicator light | Local-first, cloud optional | ⭐⭐⭐⭐ 8/10 | ⭐⭐⭐⭐ 7.5/10 |
| Solos AirGo 3 | $249 | Strong (no camera, audio only) | ⭐⭐⭐ ChatGPT-powered audio assistant | N/A (no camera) | Minimal — audio commands only | ⭐⭐⭐⭐⭐ 9.5/10 | ⭐⭐⭐ 7/10 |
| Apple Smart Glasses (2027) | TBA (est. $300–$500) | Expected strongest (Apple privacy brand) | ⭐⭐⭐⭐⭐ Siri AI, gesture recognition | Hardware indicator (planned) | On-device processing (Apple’s stated approach) | ⭐⭐⭐⭐⭐ 9/10 (projected) | TBA |

Sources: Treeview Smart Glasses Guide 2026 | Cybernews AI Glasses Review 2026 | VR.org AR Glasses Buyer’s Guide | UnboxFuture Samsung vs Meta 2026

🔍 MindtrixAI Verdict on Brands: Solos AirGo 3 wins on privacy because it has no camera. Rokid wins among camera-equipped glasses for its local-first approach and visible recording indicator. Meta leads on AI capability and market share but scores lowest on meaningful privacy controls. Google and Apple have the most potential to raise the bar — but only if they follow through on their stated architectures.

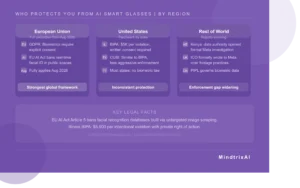

What Laws Currently Protect You? (By Region)

The legal picture varies significantly by geography and is moving fast. Here is where things stand as of mid-2026.

European Union: Strongest Global Framework

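The EU AI Act entered into force on August 1, 2024, and applies generally from August 2, 2026; its Article 5 prohibitions have already applied since February 2, 2025. Article 5 bans AI systems that build facial recognition databases through untargeted scraping of facial images from the internet or CCTV footage. It also bans systems that infer emotions in workplaces or educational settings (except for medical or safety reasons). Finally, it bans systems that categorize people from biometric data to infer sensitive traits such as race, political opinions, religious beliefs, or sexual orientation.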

Under GDPR, biometric data is classified as “special category data.” Processing is generally prohibited unless the individual provides explicit consent. If someone in the EU uses AI glasses to identify you on the street, they are almost certainly breaking the law.

United States: A Patchwork by State

The Illinois Biometric Information Privacy Act (BIPA) requires written consent before collecting biometric data. Penalties reach $5,000 per intentional violation, and BIPA provides a private right of action. Texas and Washington have similar laws with different enforcement approaches. In 11 US states, all parties must consent to the recording of private conversations.
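A quick calculation shows why BIPA's private right of action carries weight. Under the statute (740 ILCS 14/20), a prevailing party recovers either liquidated damages or actual damages, whichever is greater. Liquidated damages are $1,000 per negligent violation and $5,000 per intentional or reckless violation. The sketch below assumes, for illustration only, that each person scanned without written consent counts as one violation. How violations are actually counted is a legal question that this snippet does not settle, and the headcount is hypothetical.

```python
# Liquidated damages per violation under Illinois BIPA (740 ILCS 14/20).
NEGLIGENT_PER_VIOLATION = 1_000
INTENTIONAL_PER_VIOLATION = 5_000  # intentional or reckless


def bipa_exposure(people_scanned: int, intentional: bool) -> int:
    """Liquidated damages, ASSUMING exactly one violation per person scanned."""
    rate = INTENTIONAL_PER_VIOLATION if intentional else NEGLIGENT_PER_VIOLATION
    return people_scanned * rate


# Hypothetical: 50 people identified in Illinois without written consent.
print(bipa_exposure(50, intentional=True))
print(bipa_exposure(50, intentional=False))
```

Even under this conservative one-violation-per-person assumption, identifying 50 people intentionally maps to $250,000 in liquidated damages, and negligent collection maps to $50,000.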

Most US states have no biometric law at all. If you live outside Illinois, Texas, or Washington, you have almost no legal protection from being silently identified through AI glasses in public.

⚖️ Legal fact: The EU AI Act Article 5 bans building facial recognition databases via untargeted image scraping. Illinois BIPA fines reach $5,000 per intentional violation with private right of action. Full EU enforcement begins August 2, 2026. — artificialintelligenceact.eu | Purdue Global Law School

What Reddit and Gen Z Are Really Saying

The academic and regulatory conversation is important. But the street-level reaction is sharper than most tech coverage acknowledges.

The Washington Post reported that Gen Z — a generation that grew up in an internet era defined by poor personal privacy — is at the forefront of a new backlash against smart glasses’ intrusion into everyday life. “It feels like a part of my life,” said one person interviewed, “to be constantly worried and thinking about my privacy or my data being stolen or used for bad reasons.”

From Reddit discussions, the range of opinion is wide but the emotion is real:

- “No I do not consent to being filmed or my kids being recorded by oddballs wearing spy camera glasses. This is a green light for voyeurism and perverts.”

- “As long as you are in a public place, you don’t have the presumption of privacy, and you can legally be filmed.”

- “In some countries, people are not allowed to record or even take photos of people. So there are going to be a lot of issues as these big companies start selling this type of product.”

- “If you see someone wearing them: ‘ARE THOSE THE SMART GLASSES THAT RECORD EVERYTHING?!’ As loud as you can.” — one user’s proposed social response

- “Functionally, the glasses are no different from someone walking around recording with their phone.”

🔍 MindtrixAI Finding: The Reddit and Gen Z reaction is not technophobia. It reflects a generation that has already had its privacy eroded by social media, location tracking, and data brokers. AI smart glasses do not represent a new threat to them — they represent the latest chapter in a longer story of normalised surveillance they were never asked to agree to.

What Are Companies Doing? (The Honest Assessment)

Some design choices do exist, and it is fair to acknowledge them while examining whether they are adequate.

Meta’s Ray-Ban glasses have the LED recording indicator. Meta’s spokesperson told The Washington Post the company’s Ray-Ban glasses feature a light that signals when recording is active, along with a sensor that detects when someone blocks the light — and that disabling the warning light violates Meta’s user agreement.
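The behavior Meta describes, a light that signals recording plus a sensor that notices when the light is blocked, amounts to an interlock. Meta has not published how its firmware handles this. The sketch below is therefore a hypothetical model, not Meta's logic: recording starts only if the indicator is unobstructed, and it stops if the indicator becomes obstructed mid-capture. All class and method names are invented for illustration.

```python
from enum import Enum, auto


class CameraState(Enum):
    IDLE = auto()
    RECORDING = auto()


class IndicatorInterlock:
    """Hypothetical model: the camera may only run while its LED is visible."""

    def __init__(self) -> None:
        self.state = CameraState.IDLE
        self.led_on = False

    def request_recording(self, led_obstructed: bool) -> bool:
        # Refuse to start if the light sensor reports the LED is covered.
        if led_obstructed:
            return False
        self.led_on = True
        self.state = CameraState.RECORDING
        return True

    def sensor_update(self, led_obstructed: bool) -> None:
        # If the LED is covered mid-capture, stop rather than keep recording silently.
        if self.state is CameraState.RECORDING and led_obstructed:
            self.stop()

    def stop(self) -> None:
        self.state = CameraState.IDLE
        self.led_on = False


cam = IndicatorInterlock()
print(cam.request_recording(led_obstructed=True))   # refused: LED covered
print(cam.request_recording(led_obstructed=False))  # accepted: LED visible
cam.sensor_update(led_obstructed=True)
print(cam.state.name)                                # back to IDLE
```

The design choice worth noting is that the model fails closed. An obstructed indicator ends capture instead of merely logging a warning, so covering the light cannot be used to record unnoticed.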

On-device AI processing is increasingly the standard for 2025–2026 devices, reducing data transmitted to the cloud. Apple’s Vision Pro features an EyeSight display that shows the wearer’s eyes to bystanders, signaling when they are in an immersive mode. Rokid’s glasses store footage locally by default.

The problems are not small: a recording light that can be covered, a consent flow buried in a setup screen, a privacy policy that mentions AI processing but not human review. These are technically compliant choices that offer practically no meaningful protection to bystanders.

“For this vision to materialize responsibly, a ‘trust-first framework’ emphasizing transparency, robust user controls, and adherence to social protocols and ethical design is paramount.” — Financial Content Markets, Meta AI Glasses Analysis, October 2025

What You Can Actually Do: A Consumer Action Guide

You cannot stop this technology from existing. But you can navigate it more deliberately.

If You Wear AI Smart Glasses

- Check and adjust your data sharing settings during initial setup, not after

- Tell people around you clearly when the camera is active

- Avoid wearing them in medical appointments, financial conversations, or intimate service settings

- Never leave them passively running in a room — especially in someone else’s private space

- Opt out of human data review and AI training where the settings allow

If Someone Near You Is Wearing Smart Glasses

- In private spaces like workplaces, you may have legal rights to object to being recorded

- If footage of you has been shared without consent, most platforms have non-consensual intimate imagery takedown mechanisms

- In EU countries, you have the right to request deletion of data captured about you under GDPR

- Remove yourself from facial recognition databases like PimEyes and FaceCheck ID directly

- Remove yourself from people-search engines like FastPeopleSearch and CheckThem

✅ Consumer checklist before wearing AI smart glasses in public:

1. Do you know whether your glasses are currently recording?

2. Do the people around you know?

3. Have you opted out of human review of your footage?

4. Do you know how long your voice recordings are stored?

5. Are you in a space where recording others is legally permitted?

If you cannot answer all five, you are carrying risk you may not have intended.

MindtrixAI Verdict: Where the Line Should Be

The debate around AI smart glasses vs privacy is often framed as innovation versus fear. That framing is not honest. The choice is not between progress and paranoia. It is between who benefits from the technology and who bears the cost.

Right now, the person wearing the glasses gets the benefit. The people around them bear the cost — the possibility of being recorded, identified, analyzed, and reviewed by strangers, without consent, without knowledge, and in most places without any legal recourse.

The line has three parts.

Product design has to make privacy protection genuinely impossible to circumvent, not optional. Recording indicators that cannot be disabled. Human review disclosed in plain language, not buried in policy documents. Explicit opt-in for AI training data, not opt-out after the fact.

Regulation needs to move at the speed of the technology. The EU AI Act is a start. The US needs equivalent frameworks — not eventually, but now, while the market is still forming.

Social norms have to do the work law cannot. Filming strangers without their knowledge is not illegal in most places. It is still wrong. The same instinct that stops most people photographing strangers in intimate moments needs to extend to AI glasses. The technology makes the violation quieter. That does not make it acceptable.


FAQs About AI Smart Glasses and Privacy

Can AI smart glasses record without you knowing?

Can someone identify me from AI glasses in public?

Is recording someone with smart glasses illegal?

What happened with Meta and the Sama scandal?

What does the EU AI Act say about AI smart glasses?

Which AI smart glasses have the best privacy protection?

Can my employer require me to wear AI smart glasses at work?

Final Thoughts on AI Smart Glasses vs Privacy

The conversation around AI smart glasses vs privacy is not about whether the technology is impressive. It clearly is. The market hit $2.9 billion in 2025, 7 million pairs of Ray-Bans shipped in a single year, and the category is accelerating toward tens of millions of units by 2030. None of that makes the documented harms — strangers identified in seconds, intimate footage reviewed without consent, workers silenced for speaking up — acceptable side effects of progress.

The question every consumer, policymaker, and product team needs to answer is a simple one: whose convenience matters? Right now, the wearer gets the utility. The bystander takes the risk. Fixing that imbalance is not a technical problem. It is a choice. And the window to make that choice deliberately, before the market normalizes the current state, is closing faster than most people realize. The line exists. We just have to decide to draw it.
